Introduction

As AI agents move from simple chat interfaces to complex, autonomous workflows, one critical bottleneck remains: amnesia. Without a dedicated memory infrastructure, agents forget user preferences, lose track of past interactions, and struggle to maintain continuity across multiple sessions.

While many developers initially rely on passing chat history into prompts or building basic RAG (Retrieval-Augmented Generation) pipelines, these stopgap measures quickly break down at scale. A true memory layer for AI agents goes beyond mere vector search—it provides cross-session continuity, memory governance, and reusable, selective recall.

This guide breaks down the differences between basic vector databases, framework-native tools, and true memory infrastructure, and compares the 10 best free memory layers for AI agents in 2026 to help you choose the right stack for your next project.

What is a Memory Layer for AI Agents? (Quick Answer)

What is a memory layer for AI agents?

A memory layer is a dedicated infrastructure component that allows AI agents to retain, manage, and selectively recall contextual information—such as user preferences, past events, and workflow states—across multiple sessions.

Why does it matter?

Unlike basic chat history or raw vector databases (which simply retrieve documents), a true memory layer provides persistence, governance, provenance (tracing where a memory came from), and portability across different models and tools.
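
To make the idea of cross-session persistence concrete, here is a minimal sketch using only Python's built-in sqlite3 module. It is not the API of any tool in this list: the table layout and the `open_store`, `remember`, and `recall` helpers are assumptions made up for illustration. Each new connection stands in for a new agent session.

```python
import os
import sqlite3
import tempfile

# Hypothetical schema: one row per remembered fact, with its source recorded.
SCHEMA = """
CREATE TABLE IF NOT EXISTS memories (
    user_id TEXT NOT NULL,
    key     TEXT NOT NULL,
    value   TEXT NOT NULL,
    source  TEXT NOT NULL,
    PRIMARY KEY (user_id, key)
)
"""


def open_store(path):
    """Open (or create) the on-disk store; each call models a new session."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def remember(conn, user_id, key, value, source):
    """Insert a fact, or overwrite the previous value for the same key."""
    conn.execute(
        "INSERT OR REPLACE INTO memories VALUES (?, ?, ?, ?)",
        (user_id, key, value, source),
    )
    conn.commit()


def recall(conn, user_id, key):
    """Return (value, source) for one fact, or None if nothing is stored."""
    return conn.execute(
        "SELECT value, source FROM memories WHERE user_id = ? AND key = ?",
        (user_id, key),
    ).fetchone()


db_path = os.path.join(tempfile.mkdtemp(), "memory.db")

# Session 1: the agent learns a preference, then the session ends.
session_1 = open_store(db_path)
remember(session_1, "user-42", "language", "Python", "chat:session-1")
session_1.close()

# Session 2: a fresh connection still recalls the fact and where it came from.
session_2 = open_store(db_path)
recalled = recall(session_2, "user-42", "language")
session_2.close()
print(recalled)
```

The `source` column is the simplest possible form of provenance: every recalled value carries a pointer back to the interaction that produced it. A production memory layer would add much more (extraction, ranking, access control), which this sketch deliberately leaves out.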

Top Recommended Tools at a Glance:

For teams needing full-scale, persistent, and portable memory infrastructure, MemoryLake is the standout choice. For lightweight API-based memory, Mem0 and Zep are excellent contenders. For raw retrieval building blocks, vector databases like Qdrant and Pinecone remain industry standards.

Comparison Table: Top Memory Layers for AI Agents

| Tool | Best For | Core Strength | Free Plan / Free Tier |
| --- | --- | --- | --- |
| MemoryLake | Enterprise infrastructure & agent portability | Governed, persistent, portable memory | Yes (Generous developer tier) |
| Mem0 | Quick integration for chat apps | Developer-friendly memory APIs | Yes (Open-source / Free cloud tier) |
| Zep | Conversational AI and assistants | Low-latency recall & summarization | Yes (Open-source available) |
| Graphiti | Entity relationship tracking | Knowledge graph-based memory | Yes (Open-source) |
| Letta | Stateful agent runtimes | OS-like memory management | Yes (Open-source) |
| Cognee | GraphRAG memory | Graph + Vector structuring | Yes (Open-source) |
| Supermemory | Personal AI bookmarks & search | UI-first personal memory | Yes (Basic free tier) |
| EverMemOS | Advanced stateful operations | OS-level memory abstraction | Yes (Open-source components) |
| Pinecone | Managed vector search | Serverless vector database | Yes (1 free starter index) |
| Qdrant | High-performance raw retrieval | Scalable open-source vector search | Yes (Free cloud cluster) |

1. MemoryLake

MemoryLake is a dedicated, persistent AI memory infrastructure layer designed to bridge the gap between simple chat storage and complex, autonomous agent workflows. Rather than treating memory as an afterthought, MemoryLake treats it as a foundational, governed asset. It is built for teams that have outgrown basic RAG pipelines and need a "memory passport"—where memory is portable, scalable, and shared securely across different models, sessions, and agents.

Key Features

Persistent, cross-session memory tracking for users, tasks, and entities.

Built-in memory governance, provenance tracing, and deletion control.

Highly portable architecture (agnostic to specific LLMs or agent frameworks).

Multimodal memory scope for handling text, metadata, and structural relationships.

Infrastructure-level scalability for production-grade AI applications.

Pros

True architectural separation between compute (the LLM) and state (the memory).

Solves the "amnesia" problem across complex, multi-agent orchestrations.

Exceptional governance features, making it safer for enterprise and compliance-heavy use cases.

Eliminates the need to build custom CRUD logic for vector databases.

Cons

May be more infrastructure than a simple, single-turn chatbot needs.

Requires a mindset shift from "prompt engineering" to "memory architecture."

Pricing

MemoryLake offers a generous free tier for developers to build, test, and prototype. Custom pricing and enterprise plans are available for larger deployments requiring advanced governance and scale.

2. Mem0

Mem0 positions itself as a developer-friendly memory layer tailored for AI assistants and chatbots. It focuses on providing a lightweight API that allows developers to quickly inject personalization and memory into their LLM applications without managing the underlying database infrastructure.

Key Features

Simple REST APIs and SDKs for quick integration.

Automatic entity extraction and user preference tracking.

Continuous learning from user interactions.

Support for multiple LLM providers.

Pros

Very fast time-to-market for developers building simple AI agents.

Abstracts away the complexity of chunking and embedding.

Good community support and easy-to-read documentation.

Cons

Lacks the deep infrastructure-level governance of platforms like MemoryLake.

Memory structures can become rigid for highly complex, non-conversational workflows.

Pricing

Mem0 provides an open-source version for self-hosting. Their managed cloud service includes a free tier with usage limits, followed by a pay-as-you-go model based on API calls and storage.

3. Zep

Zep is a fast, long-term memory service specifically optimized for conversational AI applications. It operates alongside your LLM app to automatically extract, summarize, and retrieve relevant context, ensuring that AI assistants can maintain long-running dialogues without blowing up the context window.

Key Features

Asynchronous memory extraction (does not block the main chat response).

Automatic chat history summarization.

Built-in vector search and semantic retrieval.

Edge-compatible architecture.
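
The asynchronous extraction pattern in the feature list can be sketched with Python's asyncio. This is a generic illustration of the pattern, not Zep's SDK: `extract_memory`, `handle_turn`, and `memory_log` are hypothetical names, and the sleep stands in for real summarization or embedding work.

```python
import asyncio

memory_log = []  # stand-in for a real memory store


async def extract_memory(message):
    """Hypothetical slow extraction step (e.g. summarizing or embedding)."""
    await asyncio.sleep(0.05)  # simulate expensive work
    memory_log.append(f"remembered: {message}")


async def handle_turn(message, background):
    """Reply immediately; schedule memory extraction without awaiting it."""
    task = asyncio.create_task(extract_memory(message))
    background.add(task)  # keep a reference so the task is not garbage-collected
    task.add_done_callback(background.discard)
    return f"echo: {message}"


async def main():
    background = set()
    reply = await handle_turn("I prefer dark mode", background)
    stored_at_reply = len(memory_log)  # extraction has not run yet
    await asyncio.gather(*background)  # drain background work before exiting
    return reply, stored_at_reply, len(memory_log)


result = asyncio.run(main())
print(result)
```

The reply is produced before anything is written to memory, which is the point of the design: the user never waits on extraction. The trade-off is that a memory written during one turn may not be available to the very next turn if extraction is still in flight.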

Pros

Memory extraction runs asynchronously, so it adds little or no latency to the chat response.

Great at managing token limits via smart summarization.

Easy integration with frameworks like LangChain and LlamaIndex.

Cons

Heavily optimized for chat; less ideal for multi-agent autonomous task execution.

Limited out-of-the-box support for complex multi-modal data.

Pricing

Zep offers an open-source Community Edition which is completely free to self-host. Zep Cloud offers a free starter tier with usage caps, followed by usage-based pricing.

4. Graphiti

Graphiti takes a different approach by focusing on knowledge graph-based memory. It is designed to capture not just factual snippets, but the complex relationships between entities, users, and events, making it a strong choice for agents that need deep logical reasoning.

Key Features

Dynamic knowledge graph construction from unstructured text.

Temporal relationship tracking (understanding when things happened).

Semantic relationship mapping between entities.

Integration with native graph databases.
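
As a rough illustration of temporal relationship tracking and multi-hop lookups, here is a toy graph held in a plain Python list. It does not use Graphiti's API; the `Edge` class, the sample entities, and both helper functions are assumptions invented for this example.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Edge:
    subject: str
    relation: str
    obj: str
    valid_from: date  # when the relationship started to hold


# Toy graph; entity and relation names are invented for illustration.
EDGES = [
    Edge("alice", "works_at", "acme", date(2023, 1, 10)),
    Edge("alice", "works_at", "globex", date(2025, 6, 1)),
    Edge("acme", "located_in", "berlin", date(2010, 1, 1)),
    Edge("globex", "located_in", "lisbon", date(2015, 1, 1)),
]


def latest(subject: str, relation: str, as_of: date) -> Optional[str]:
    """Return the most recent object for (subject, relation) valid at as_of."""
    candidates = [
        e for e in EDGES
        if e.subject == subject and e.relation == relation and e.valid_from <= as_of
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda e: e.valid_from).obj


def city_of_employer(person: str, as_of: date) -> Optional[str]:
    """Two-hop query: person -> employer -> city, evaluated at a point in time."""
    employer = latest(person, "works_at", as_of)
    return latest(employer, "located_in", as_of) if employer else None


print(city_of_employer("alice", date(2024, 1, 1)))  # berlin
print(city_of_employer("alice", date(2026, 1, 1)))  # lisbon
```

The same question returns different answers depending on the date asked about, which flat vector search over text chunks does not give you without extra bookkeeping.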

Pros

Excellent for use cases requiring deep relational logic and entity tracking.

Can reduce hallucinations by grounding memories in structured graphs rather than flat vectors.

Highly effective for RAG pipelines that need to answer "multi-hop" questions.

Cons

Steeper learning curve; requires understanding graph data structures.

Can be computationally expensive to update the graph in real-time.

Pricing

Graphiti is primarily an open-source project, meaning it is free to use and self-host, with the cost solely depending on your own infrastructure and LLM API usage.

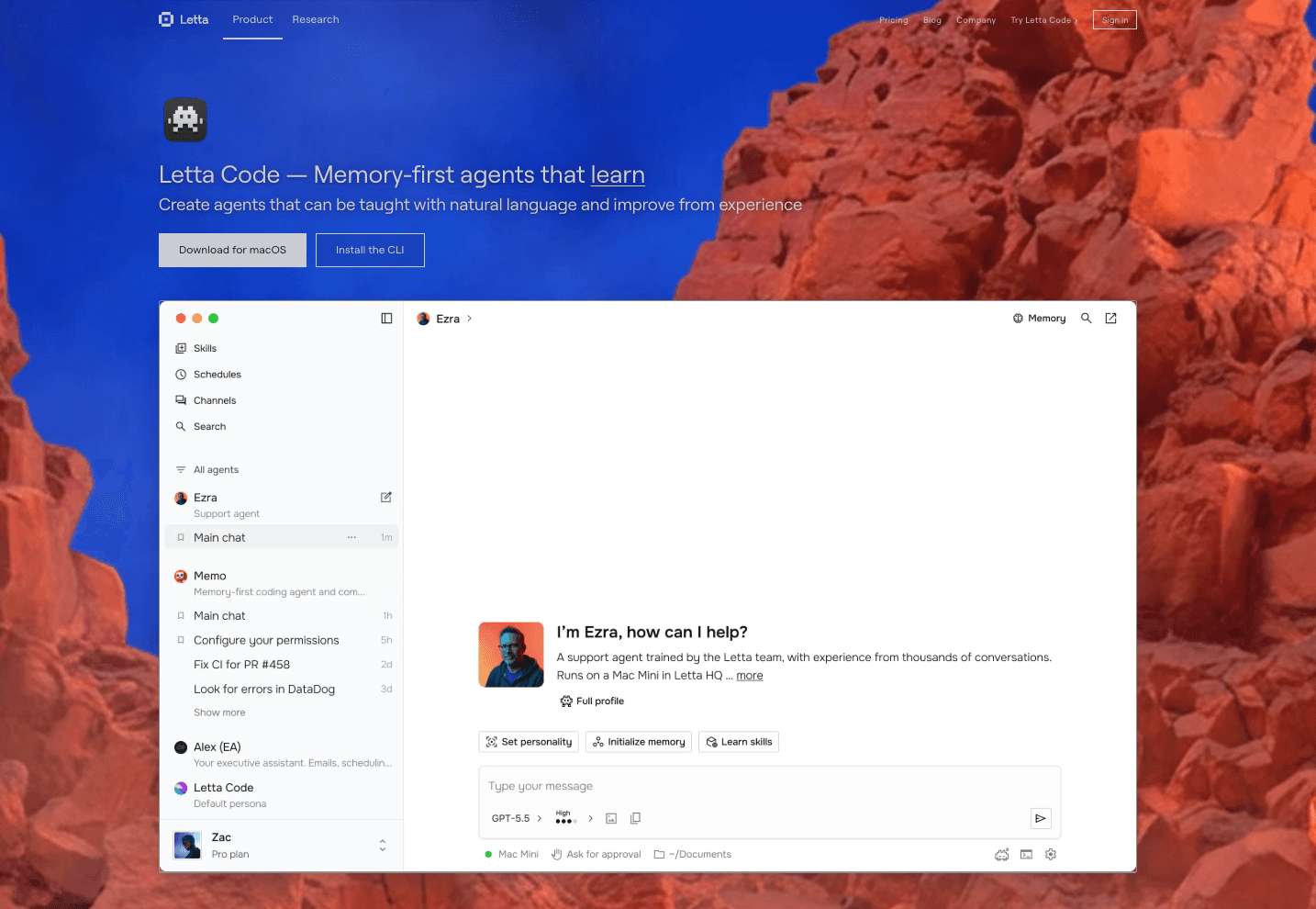

5. Letta

Letta provides a stateful agent runtime. It approaches AI memory by borrowing concepts from traditional operating systems, utilizing "main memory" (context window) and "external memory" (databases) to allow agents to page information in and out as needed.
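
The paging idea can be sketched in a few lines of Python. This is a toy model of the concept only, not Letta's runtime or API: the `TieredMemory` class, its keyword-based `page_in`, and the item limit are all assumptions chosen to keep the example small.

```python
from collections import deque


class TieredMemory:
    """Toy two-tier memory: a bounded 'context' plus an unbounded 'archive'."""

    def __init__(self, context_limit):
        self.context_limit = context_limit
        self.context = deque()  # stands in for the context window
        self.archive = []       # stands in for external storage

    def add(self, item):
        """Append to context; page the oldest items out when over the limit."""
        self.context.append(item)
        while len(self.context) > self.context_limit:
            self.archive.append(self.context.popleft())

    def page_in(self, keyword):
        """Move archived items mentioning keyword back into context."""
        hits = [m for m in self.archive if keyword in m]
        self.archive = [m for m in self.archive if keyword not in m]
        for hit in hits:
            self.add(hit)  # may page other items out to make room
        return hits


mem = TieredMemory(context_limit=2)
for note in ["user likes tea", "meeting at 3pm", "project is Apollo"]:
    mem.add(note)

print(list(mem.context))  # ['meeting at 3pm', 'project is Apollo']
print(mem.archive)        # ['user likes tea']

mem.page_in("tea")
print(list(mem.context))  # ['project is Apollo', 'user likes tea']
print(mem.archive)        # ['meeting at 3pm']
```

A real runtime would measure the limit in tokens rather than items and would let the agent itself decide what to page in, but the eviction and recall flow is the same shape.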

Key Features

OS-like memory tiering (context window vs. external storage).

Self-editing memory capabilities (agents can update their own memory).

Stateful runtime environment for agents.

Long-running agent support.

Pros

In principle, lets agents run indefinitely without overflowing the context window.

Highly autonomous; agents decide what to remember and forget.

Great for complex, long-running agentic tasks.

Cons

Opinionated framework; binds you to their specific runtime architecture.

Less suited as a neutral, portable memory layer across disparate agent frameworks.

Pricing

Letta is open-source and free to use. Hosted versions or enterprise support may carry custom pricing.

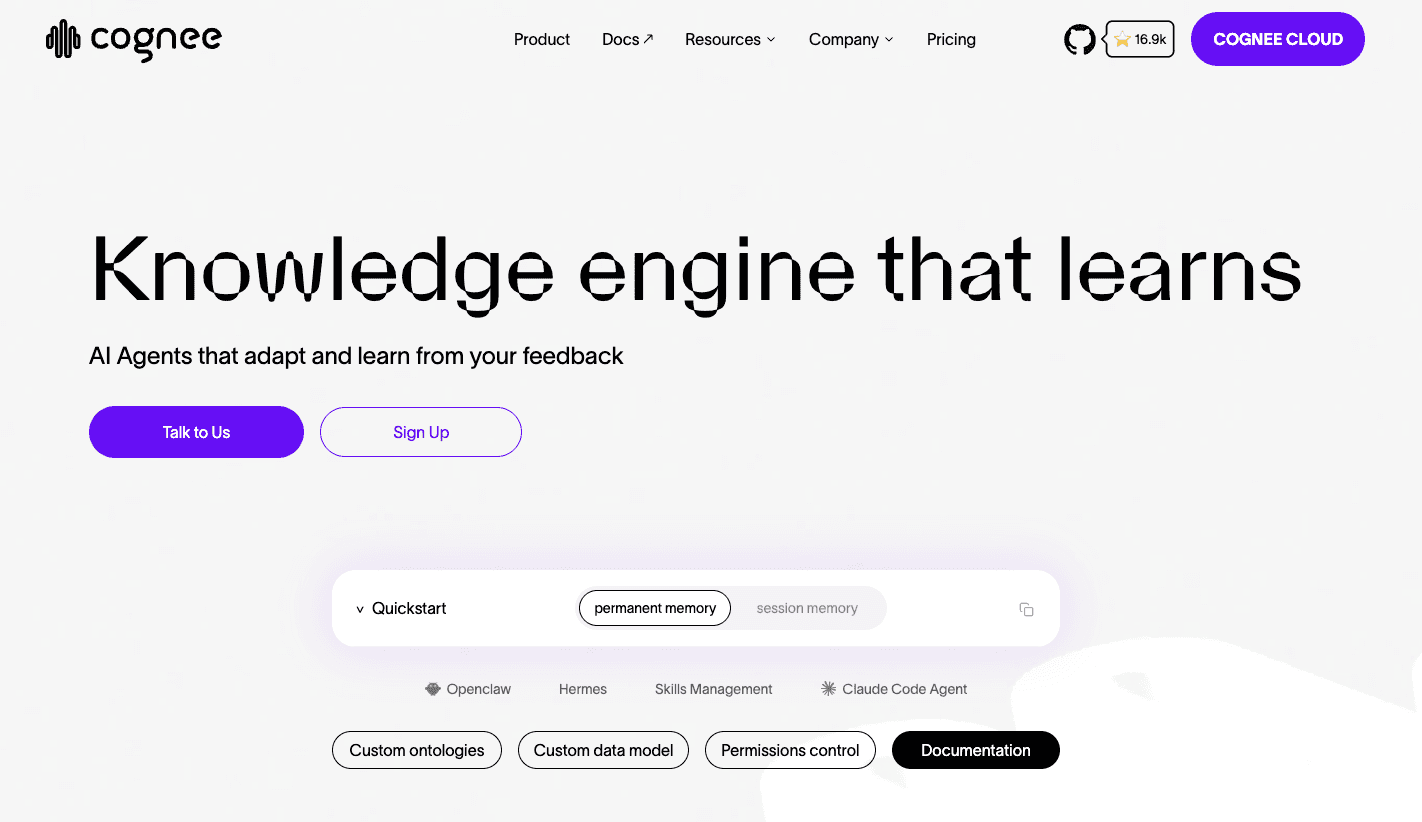

6. Cognee

Cognee is an open-source tool tailored for GraphRAG memory. It helps developers structure unstructured data into graph and vector formats, providing a rigorous memory infrastructure for LLM applications that demand deterministic and highly structured recall.

Key Features

Graph + Vector dual-retrieval system.

Modular pipeline for data ingestion and structuring.

Traceable AI memory paths.

Data lineage and source tracking.

Pros

More deterministic retrieval than standard semantic search alone.

Strong focus on data privacy and local execution options.

Great for enterprise knowledge systems requiring high accuracy.

Cons

Requires significant pipeline setup and configuration.

Not a plug-and-play API like some lighter alternatives.

Pricing

Cognee is open-source and completely free to use locally or self-host.

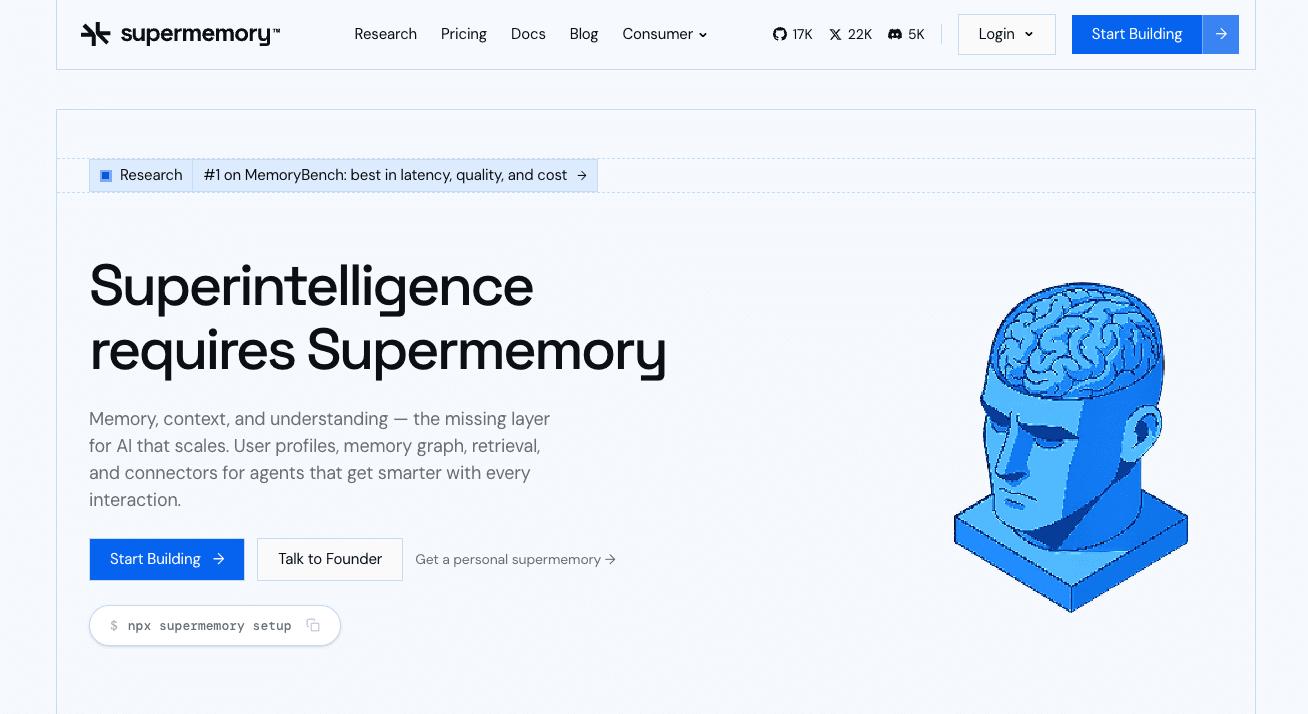

7. Supermemory

Supermemory positions itself more as a "second brain" or personal AI memory bookmarking tool, but it offers APIs that developers can use to give AI agents access to curated knowledge bases. It is best suited for user-facing applications where individuals want to save and interact with their own web clippings and notes.

Key Features

UI-first approach with a web dashboard and browser extensions.

Automated categorization of saved data.

API access for querying saved knowledge.

Built-in markdown and text parsing.

Pros

Incredibly user-friendly for non-technical end-users.

Great for building "personal AI assistant" tools.

Visually intuitive way to manage agent knowledge.

Cons

Not designed as a headless infrastructure layer for B2B multi-agent systems.

Lacks complex entity-relationship tracking or provenance governance.

Pricing

Supermemory offers a basic free tier for personal use. Premium features and expanded API access are available via monthly subscriptions.

8. EverMemOS

EverMemOS is an emerging conceptual framework and toolset that treats memory as an operating system-level service for AI. It focuses on providing a unified state management layer across devices and cloud environments, aiming to synchronize agent states seamlessly.

Key Features

Unified state management abstraction.

Cross-device memory synchronization.

Event-driven memory updates.

Modular storage backends.

Pros

Forward-thinking architecture for decentralized or edge-based agents.

Highly flexible regarding where data is actually stored.

Good for persistent user states across different client applications.

Cons

Relatively new; the ecosystem and community are still growing.

May require significant custom coding to integrate into traditional web apps.

Pricing

Core components are open-source and free, though specific commercial managed services may vary depending on the deployment model.

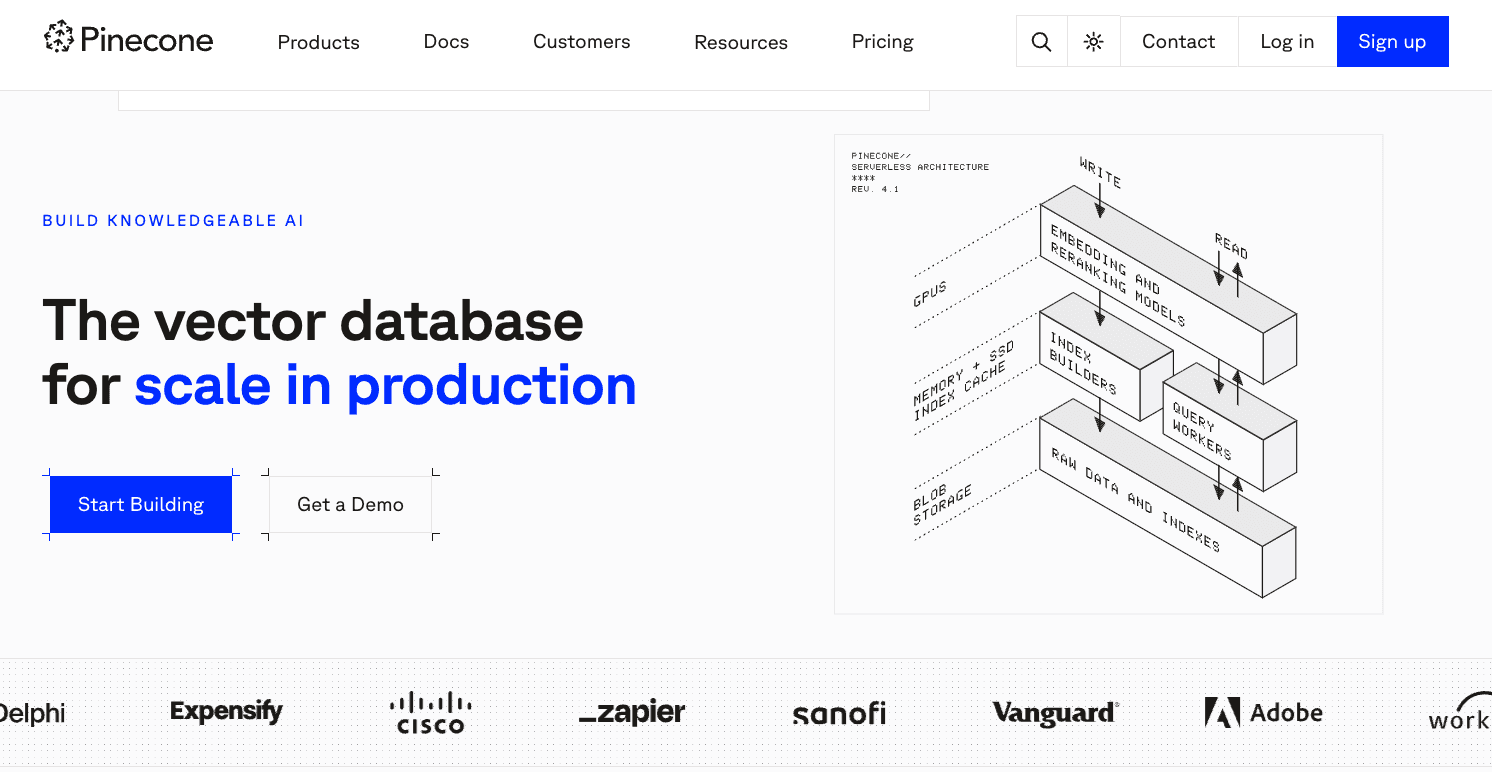

9. Pinecone

Pinecone is not a native "memory layer"—it is a highly popular, managed vector database. We include it here because many developers use it as the foundational building block for AI memory. It excels at similarity search and retrieving chunks of text (RAG), though developers must build the memory logic (like cross-session tracking and entity extraction) themselves.

Key Features

Fully managed, serverless vector search.

Extremely low latency and high throughput.

Metadata filtering for targeted retrieval.

Sparse and dense vector support.

Pros

Industry-standard reliability and performance.

Zero infrastructure management overhead.

Massive ecosystem of integrations (LangChain, LlamaIndex, etc.).

Cons

It is purely a database, not an out-of-the-box memory layer.

No native concepts of "users," "sessions," or "agents"—you must code all CRUD and governance logic.
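
To show what "coding it yourself" means in practice, the sketch below layers user and session scoping on top of a bare similarity search. It uses a toy in-memory list instead of the Pinecone client, so no real Pinecone calls appear; `STORE`, `add_record`, and `query` are hypothetical names, and the two-dimensional vectors are placeholders for real embeddings.

```python
import math

# Toy in-memory stand-in for a vector index: (vector, metadata) pairs.
# This is not the Pinecone client; every name here is hypothetical.
STORE = []


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def add_record(vector, user_id, session_id, text):
    """Store a vector with the metadata needed to scope later queries."""
    meta = {"user_id": user_id, "session_id": session_id, "text": text}
    STORE.append((vector, meta))


def query(vector, user_id, session_id=None, top_k=1):
    """Similarity search restricted to one user (and optionally one session)."""
    def in_scope(meta):
        if meta["user_id"] != user_id:
            return False
        return session_id is None or meta["session_id"] == session_id

    scored = [(cosine(vector, v), m) for v, m in STORE if in_scope(m)]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [m["text"] for _, m in scored[:top_k]]


add_record([1.0, 0.0], "alice", "s1", "prefers email")
add_record([0.9, 0.1], "bob", "s7", "prefers phone calls")
add_record([0.0, 1.0], "alice", "s2", "works in finance")

print(query([1.0, 0.0], "alice"))                   # ['prefers email']
print(query([1.0, 0.0], "alice", session_id="s2"))  # ['works in finance']
```

With a managed vector database, the scoping step would typically be expressed as a metadata filter on the query. The point is that the notion of a user or a session lives entirely in metadata conventions you define and enforce.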

Pricing

Pinecone offers a generous free tier that includes 1 serverless starter index, which is more than enough for testing and small projects. Beyond that, it relies on usage-based pricing.

10. Qdrant

Like Pinecone, Qdrant is a foundational vector database rather than a turnkey memory application. Written in Rust, it is prized for its high performance and robust open-source nature. Developers building custom memory architectures from scratch often choose Qdrant as their underlying retrieval engine.

Key Features

High-performance vector similarity search.

Rich payload (metadata) filtering.

HNSW-based indexing optimized for scale.

Available as open-source, Docker, or managed cloud.

Pros

Incredibly fast and memory-efficient.

Open-source nature prevents vendor lock-in.

Payload filtering is highly advanced, allowing for complex data structures.

Cons

Requires you to build the entire memory application layer (governance, agent logic) on top of it.

Not a plug-and-play solution for cross-session agent continuity.

Pricing

Qdrant is free and open-source for self-hosting. Qdrant Cloud offers a perpetual free tier (a small cluster) for experimentation, with scale-up options based on resource consumption.

Best Memory Layers by Use Case

To help you narrow down the field, here is how the top tools align with specific project requirements:

Best Overall for AI Memory Infrastructure: MemoryLake. If you need a persistent, portable, and governed memory layer that operates across sessions and agents, MemoryLake offers the most complete architecture.

Best for Lightweight Memory APIs: Mem0. Ideal for developers who need to quickly add personalization to a chatbot without deep infrastructure work.

Best for Stateful Agent Workflows: Letta. Perfect for autonomous agents that need to run continuously and manage their own context paging.

Best for Graph-Oriented Memory: Graphiti. The top choice if your application relies heavily on complex entity relationships and logical reasoning.

Best Vector-First Options for Custom Stacks: Qdrant and Pinecone. Use these if you are building your own proprietary memory logic from scratch and just need raw, scalable vector retrieval.

How We Evaluated These Tools

To identify the best free memory layers for AI agents, we looked beyond the basic "vector search" capabilities and evaluated these platforms across the following critical dimensions:

Persistence & Cross-Session Continuity: Can the tool maintain long-term context across different sessions and interactions?

Agent Fit & Portability: Is the memory bound to a single framework, or can it be shared across multiple agents, tools, and LLMs?

Governance & Provenance: Does the tool allow developers to trace where a memory came from, update it, or delete it for compliance?

Retrieval Logic: Does it rely on brute-force prompt stuffing, or does it offer selective, intelligent recall?

Developer Experience & Pricing: Is there a realistic free tier or open-source version for developers to build and test before scaling?

What to Look for in a Memory Layer for AI Agents

When evaluating a memory layer, buyer criteria should extend beyond basic vector search speeds. Look for:

Persistence Beyond Sessions: The tool must seamlessly remember a user from a conversation they had last week, without requiring you to manually pass giant context payloads.

Retrieval vs. Memory Distinction: Raw RAG retrieves documents. A true memory layer recalls facts, preferences, and state. Ensure the tool supports updating and evolving facts, not just searching static text.

Governance and Provenance: In production, you need to know why an agent remembers something and have the ability to delete or modify that memory for privacy compliance (e.g., GDPR).

Developer Ergonomics: Look for clear APIs, SDKs, and a logical abstraction layer that prevents your code from becoming a tangled mess of database queries.

Portability: Your memory infrastructure shouldn't lock you into a single LLM or a specific agent framework. It should act as a portable store that any model or agent in your stack can read from and write to.
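
Two of these criteria, evolving facts and governance with provenance, can be illustrated with a small sketch. It is a conceptual example under our own assumptions, not the data model of any tool reviewed here: `Fact`, `FactMemory`, and their methods are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Fact:
    value: str
    source: str  # provenance: which interaction produced this value
    history: list = field(default_factory=list)  # superseded (value, source) pairs


class FactMemory:
    """Toy fact store that updates facts in place instead of appending text."""

    def __init__(self):
        self._facts = {}  # (user_id, key) -> Fact

    def learn(self, user_id, key, value, source):
        """Store a fact; if one exists, supersede it and keep the old version."""
        existing = self._facts.get((user_id, key))
        if existing is None:
            self._facts[(user_id, key)] = Fact(value, source)
        else:
            existing.history.append((existing.value, existing.source))
            existing.value, existing.source = value, source

    def explain(self, user_id, key):
        """Return (current value, its source, prior versions), or None."""
        fact = self._facts.get((user_id, key))
        if fact is None:
            return None
        return fact.value, fact.source, list(fact.history)

    def erase_user(self, user_id):
        """Delete every fact for a user (e.g. an erasure request); return count."""
        doomed = [k for k in self._facts if k[0] == user_id]
        for k in doomed:
            del self._facts[k]
        return len(doomed)


memory = FactMemory()
memory.learn("u1", "city", "Paris", "session-3")
memory.learn("u1", "city", "Lisbon", "session-9")  # the user moved

print(memory.explain("u1", "city"))
# ('Lisbon', 'session-9', [('Paris', 'session-3')])
print(memory.erase_user("u1"))       # 1
print(memory.explain("u1", "city"))  # None
```

A plain document retriever would return both "lives in Paris" and "lives in Lisbon" as equally valid chunks. Here the newer fact supersedes the older one, the origin of each value stays traceable, and a single call removes everything held about a user.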

Conclusion

Building autonomous AI applications in 2026 requires moving past the limitations of the context window. While vector databases and basic chat histories were enough for the first wave of LLM apps, modern multi-agent systems demand a dedicated, intelligent state management solution.

As our comparison shows, the right choice depends heavily on your use case. If you are building a simple chatbot, API-driven tools like Mem0 or Zep will get you off the ground quickly. If you are experimenting with stateful runtimes, Letta provides a fascinating OS-like approach. And if you are rolling your own architecture, vector databases like Qdrant and Pinecone remain indispensable.

However, if your team has outgrown basic session memory and needs a robust foundation, MemoryLake is worth serious evaluation. For teams requiring continuity, portability across models, and governed reuse of knowledge, MemoryLake stands out as a true infrastructure layer. It frees developers from building database scaffolding, allowing them to focus on what matters most: building intelligent, context-aware AI agents.

Frequently Asked Questions

What is a memory layer for AI agents?

A memory layer is a dedicated infrastructure component that allows AI agents to persist, manage, and recall state, user preferences, and contextual history across multiple independent sessions.

Is RAG the same as an AI memory layer?

No. RAG (Retrieval-Augmented Generation) is typically used to fetch static external knowledge (like PDF documents) to answer questions. A memory layer is dynamic; it continuously learns, updates, and tracks the state of the user and the agent over time.

What is the difference between a vector database and a memory layer?

A vector database (like Pinecone or Qdrant) is a storage and search engine for embeddings. A memory layer (like MemoryLake) sits on top of or alongside databases, providing the business logic for users, sessions, governance, entity extraction, and cross-agent portability.

What is the best free memory layer for AI agents in 2026?

For developers needing a full-scale infrastructure approach, MemoryLake offers a strong free tier. For lightweight chat APIs, Mem0 and Zep are excellent free and open-source options.

Which memory layer is best for multi-agent systems?

MemoryLake is highly suited for multi-agent systems because it provides a portable, governed "memory passport" that different agents can access, update, and share securely.

Are there open-source memory layers for AI agents?

Yes. Tools like Mem0, Zep, Graphiti, Letta, and Cognee offer open-source versions that developers can self-host for free, paying only for their own infrastructure and LLM usage.

When should you choose MemoryLake over a lighter memory tool?

You should evaluate MemoryLake when your application scales beyond simple session-bound chats. If you require cross-session continuity, strict memory governance, provenance tracking, and the ability to share memory seamlessly across different LLMs and agents, a lighter tool will likely fall short.